Giving Plants a Voice: Designing a Conversational Agent

As artificial intelligence becomes more ingrained in everyday life, our team began to ask how AI could be applied to the natural world and help us better understand plants. We designed this study to, in a sense, “give voice to these plants” and help us learn more about them. Our study combines conversational AI agents with the plant-imaging capabilities of common plant identification apps. Our goal was to merge both into a physical prototype that lets users engage with the natural world without the distraction of an iPhone, while still conversing with the device naturally and receiving in-depth, personalized answers tailored to their specific plant’s needs and conditions.

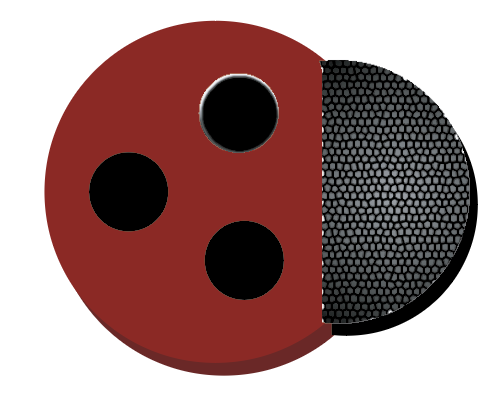

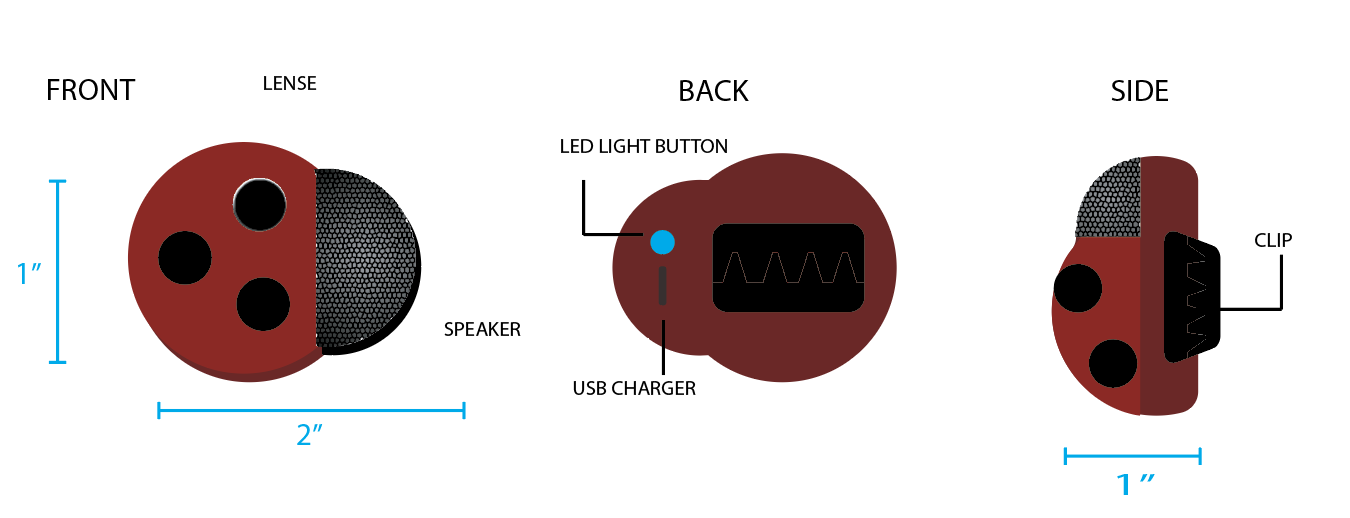

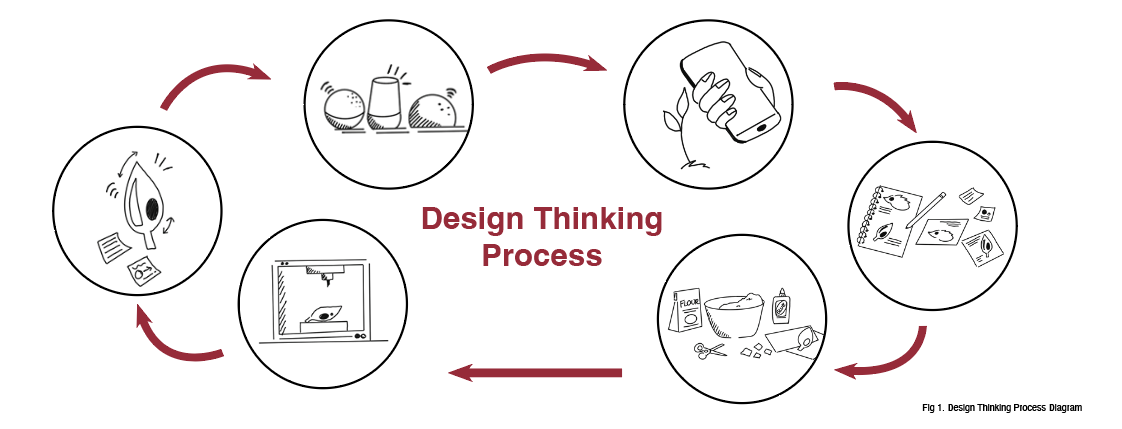

After conducting in-depth research into plant identifiers and conversational agents, I began to draft how features of both could take form in a physical prototype. My ideas started on paper as rough sketches and basic ideation, then gradually took shape through more refined renderings, low-fidelity papier-mâché and cardboard prototypes, and eventually a 3D-printed final prototype. The four members of our team then tested the final prototype using Wizard of Oz and autobiographical testing. This testing helped us identify possible corrections and room for growth in our prototypes, and let us study alternative user interactions.

Methodology:

3D Rendering: